Artificial Intelligence (AI) is developing exponentially fast. It is going to revolutionize most industries by offering a level of automation and precision no human could ever achieve. This means lots of new opportunities and applications we’ve never even thought of.

🎨 For instance, did you know an AI art generator won an art competition full of talented artists?

Even though not everyone needs to worry or care about AI, it’s worthwhile to learn some basic concepts and words associated with this field of computer science.

This is a beginner-friendly glossary of popular AI terms and concepts. Most terms on this list are basic AI jargon you might hear in news, at dinner tables, or at work. Make sure to keep up with the conversations by reading these terms!

- Make sure to also read my complete guide to Artificial Intelligence!

Anyway, let’s jump into the list of AI words!

1. AI

Artificial Intelligence or AI mimics the human intelligence process by using computer programs.

The idea of an AI program is that it learns similarly to humans. For example, an AI can drive a car, recognize images, detect objects, and much more.

AI has become one of the 21st-century buzzwords. Notice that under the hood, Artificial Intelligence is nothing but maths and probabilities.

You commonly hear people saying AI doesn’t use code. But this is far from the truth. All AI programs are written by data scientists and software developers using coding languages, such as Python. But the programs are crafted in a way that they are capable of making decisions on their own. So the actions taken by AI software are not hard-coded into the system.

Conceptually, artificial intelligence isn’t new. The first neural network propositions date back to the 1940s. But in those days the computational power wasn’t there, as you might imagine. In the 21st century, advancements in technology have made it possible to experiment with these propositions using powerful computers. This is why the field of AI is developing so quickly only now, after roughly 80 years of being a concept.

2. API

API stands for application programming interface. Through an API, developers can access data and pre-made code solutions.

In the artificial intelligence space, many companies and startups use APIs via which they can access third-party AI solutions. This can make building impressive AI applications super easy.

The concept of APIs explains why all of a sudden startups and individual developers seem to have some kind of AI superpowers.

This is why APIs are awesome. Instead of spending millions and millions in research, anyone can start an AI business with little to no cost.

For example, most of the impressive AI writing tools use OpenAI’s GPT-3 language model under the hood via an API. When doing this, a company is just an intermediary between a customer and the AI provided by a third party.

These days, anyone with a little technical skill can build impressive AI software over a weekend using the right type of API.
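To make the idea concrete, here is a minimal sketch of what calling a text-generation API from Python could look like. The endpoint URL, the API key, and the `prompt` and `max_words` fields are all made up for illustration; a real provider documents its own URL and parameters.

```python
import json
import urllib.request

# Hypothetical endpoint and key, used only for illustration.
API_URL = "https://api.example.com/v1/generate"
API_KEY = "YOUR_API_KEY"


def build_request(prompt, max_words=50):
    """Package a prompt as a JSON POST request for a text-generation API."""
    payload = {"prompt": prompt, "max_words": max_words}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


request = build_request("Write a tagline for a coffee shop")

# Sending it would look like this (not run here, because the endpoint is made up):
# with urllib.request.urlopen(request) as response:
#     result = json.loads(response.read())
```

The point is that the heavy lifting happens on the provider’s servers. Your own code only packages the input and reads the answer.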

3. Big Data

Big data refers to data sets that are too large to be processed with traditional data processing methods. Big data is a mix of structured, semi-structured, and unstructured data that organizations collect. Typically, businesses mine big data for information that helps them make better business decisions.

One example of using big data is machine learning and artificial intelligence. Artificial intelligence solutions rely on data. To train a powerful AI model, you need to run it through lots of data.

Big data is typically characterized by three V’s:

- Volume. Big data consists of large volumes of data in multiple environments.

- Variety. Big data systems store a broad variety of data.

- Velocity. The velocity describes the rate at which data is generated, collected, and processed.

4. Chatbot

Chatbots or AI chatbots are a popular application of artificial intelligence. Many businesses use AI chatbots to streamline customer support services.

One common example of chatbots is when visiting a web page and a chat box opens up. If you type something, you’ll get an immediate response. This response is not written by a human, but by an AI chatbot.

An AI chatbot uses natural language processing techniques, such as sentiment analysis, to extract information about the messages it receives. Then it uses a natural language processing model to produce an output that it predicts will best serve the recipient.
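Here is a toy sketch of that two-step flow: first guess the sentiment of a message, then pick a reply. Real chatbots use trained language models for both steps. The keyword lists and canned replies below are made up purely to show the shape of the idea.

```python
import re

# Made-up keyword lists standing in for a trained sentiment model.
POSITIVE = {"great", "love", "thanks", "good", "awesome"}
NEGATIVE = {"broken", "bad", "refund", "angry", "terrible"}

# Made-up canned replies standing in for a text-generation model.
REPLIES = {
    "positive": "Glad to hear that! Is there anything else I can help with?",
    "negative": "Sorry about the trouble. Let me connect you with support.",
    "neutral": "Thanks for your message. Could you tell me a bit more?",
}


def sentiment(message):
    """Very rough sentiment guess based on keyword counts."""
    words = re.findall(r"[a-z]+", message.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def reply(message):
    """Pick the reply that matches the detected sentiment."""
    return REPLIES[sentiment(message)]


print(reply("My order arrived broken, I want a refund!"))
```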

Chatbots are a central application of AI. They are getting better and better quickly as AI develops. These days it’s sometimes impossible to tell whether you are talking to a bot or to a human.

5. Classification

In machine learning, classification means a modeling problem where the machine learning model predicts a class label for some input data.

A great example of classification is spam filtering. Given an input message, the task of the machine learning model is to classify whether the message is spam or not.

Another example of classification is recognizing handwritten characters. Given an image of a character, the machine learning model tries to classify which character it sees.

To build a successful classifier, you need a big dataset with lots of examples of inputs and outputs via which the model can learn to predict the outcomes.

For example, to build a handwritten character recognizer, the model needs to see a vast array of examples of handwritten characters.
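As a rough illustration of the spam filter example, here is a tiny classifier written from scratch. It uses a technique called naive Bayes, which is one common choice among many. The six training messages are made up, and a real filter would need far more examples.

```python
import math
from collections import Counter

# Tiny made-up training set: (message, label) pairs.
TRAINING_DATA = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("claim your free prize", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch at noon tomorrow", "ham"),
    ("see you at the meeting", "ham"),
]


def train(examples):
    """Count how often each word appears in each class."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    return word_counts, label_counts


def classify(text, word_counts, label_counts):
    """Return the label with the highest naive Bayes score."""
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    total_examples = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        # Start from the log prior, then add a log likelihood for each word.
        score = math.log(label_counts[label] / total_examples)
        total_words = sum(word_counts[label].values())
        for word in text.split():
            # Add-one smoothing so unseen words do not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)


word_counts, label_counts = train(TRAINING_DATA)
print(classify("free prize now", word_counts, label_counts))
```

Notice how the model never sees a rule like "free means spam". It only sees labeled examples and works out the pattern from the counts.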

6. Composite AI

Composite AI refers to a combination of AI techniques for accomplishing the best results.

Remember, AI is a broad term that covers subfields like machine learning, natural language processing, deep learning, neural networks, and more.

Sometimes the solutions aren’t achievable by using a single technique. This is why sometimes the AI solutions are built using a composite structure where multiple subfields of AI are combined to get the results.

7. Computer Vision

Computer vision is one of the main subfields of artificial intelligence. A computer vision program uses image data to train a computer to “see” the visual world.

To make computer vision work, the program has to analyze and learn from digital images and videos using deep learning models. Based on the data, a computer vision program can classify objects and make decisions.

You might be surprised that the earliest experiments with computer vision AI were made back in the 1950s. During this time, the first neural networks were used to determine the edges of objects in images to categorize them into circles and squares. During the 1970s, a computer interpreted handwritten text with optical character recognition.

These four factors have made computer vision thrive:

- Mobile devices with built-in cameras.

- Increase in computational power.

- Computer-vision-focused hardware.

- New computer vision algorithms like CNN networks.

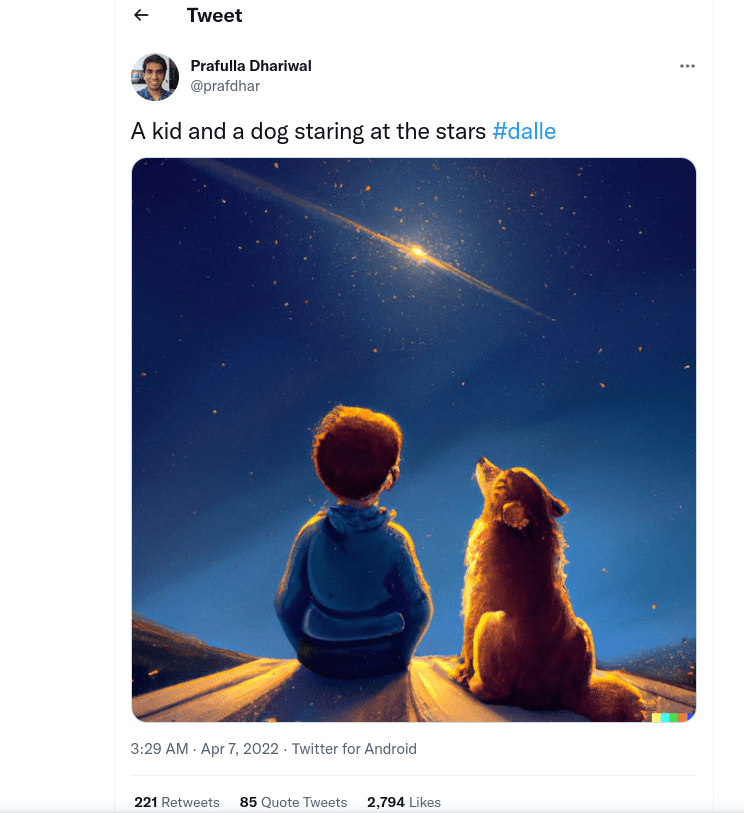

8. DALL-E 2

DALL-E 2 is an impressive text-to-image algorithm that has gained massive attention in the recent past.

In short, DALL-E 2 takes a text input and outputs an image that depicts the input. At the time of writing, DALL-E 2 isn’t publicly available. Instead, it has a waiting list with tens of thousands of accepted participants.

On a very high level, DALL-E 2 is just a function that maps text to images. But under the hood, it’s a lot more than that.

Compared to previous attempts at generating images from text, DALL-E 2 takes it to the next level. I recommend checking some of the pictures it has been able to produce. For instance, here is a Tweet from one of the creators of DALL-E 2:

DALL-E 2 uses artificial intelligence to convert text to images. To produce images, DALL-E 2 uses two techniques:

- Natural language processing for analyzing the intent of the input text.

- Computer vision creation for outputting an image that best matches the intent.

9. Data Mining

Data mining refers to finding patterns in data to predict outcomes. In other words, it’s the process of turning raw data into useful and actionable information.

Data mining involves lots of techniques businesses can use to:

- Cut costs

- Reduce risks

- Increase profit

- Enhance customer relationships

And more.

Data mining is also called “knowledge discovery in databases”, which is a lengthier yet more descriptive naming for the action. It’s a process of figuring out hidden connections in data and predicting the future with them.

The data mining process roughly follows these four steps:

- Define business objectives. This is the phase of identifying the business problem and asking a lot of questions. During this phase, analysts sometimes have to conduct some research to better understand the business context.

- Data preparation. When the problem is defined, data scientists determine the data set that helps answer the questions of the problem. During this phase, the data is cleaned up so that for example duplicates, missing values, and outliers are removed.

- Data analysis. Data scientists try to look for relationships in the data, such as correlations, association rules, or other types of patterns. This is the phase that drives toward drawing conclusions and making decisions based on the data.

- Result evaluation. After aggregating the data, it’s time to evaluate and interpret the results. During this phase, the results are finalized in an understandable and actionable format. With the results, companies can come up with new strategies and solutions to achieve their objectives.
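To give a feel for the data preparation step, here is a small sketch that removes duplicates, missing values, and outliers from a list of records. The customer records are made up, and the outlier rule (more than 5 median absolute deviations from the median) is just one arbitrary choice among many.

```python
import statistics

# Made-up raw records: (customer_id, monthly_spend). None marks a missing value.
raw = [
    (1, 120.0), (2, 95.0), (2, 95.0), (3, None),
    (4, 110.0), (5, 105.0), (6, 5000.0), (7, 98.0),
]


def prepare(records):
    """Clean the records so they are ready for analysis."""
    # 1. Drop exact duplicates while keeping the original order.
    unique = list(dict.fromkeys(records))
    # 2. Drop rows with a missing value.
    complete = [r for r in unique if r[1] is not None]
    # 3. Drop outliers: values far from the median, measured in units of the
    #    median absolute deviation (the threshold of 5 is an arbitrary choice).
    values = [value for _, value in complete]
    median = statistics.median(values)
    mad = statistics.median(abs(value - median) for value in values)
    return [r for r in complete if abs(r[1] - median) <= 5 * mad]


clean = prepare(raw)
print(clean)
```

In this sketch the duplicate row for customer 2, the missing value for customer 3, and the suspicious 5000.0 for customer 6 are all filtered out before any analysis happens.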

10. Data Science

Data science is a broad study of vast volumes of data. A data scientist uses modern tools, techniques, and algorithms to:

- Find unseen patterns

- Discover insightful information

- Make better business decisions

Data scientists use machine learning algorithms to create predictive models to extract information from the data.

The data being analyzed can come from different sources and have a variety of different formats. The data can be structured or unstructured.

Sometimes the data is already available in a data store or database.

Other times, the data needs to be obtained from somewhere, such as by scraping the web.

Here are some key concepts related to data science:

- Machine Learning

- Modeling

- Statistics

- Coding

- Databases

Before learning data science, you should be familiar with these concepts and know how to use them.

11. Deep Learning

A computer doesn’t understand learning the way we humans do. To make a computer learn, it has to mimic the human-intelligence process of learning. This is where deep learning is used.

Deep learning is a machine learning subfield. Deep learning algorithms are used to teach computers how to learn by example.

A famous deep learning application is self-driving cars.

The deep learning algorithms that run under the hood are taught to recognize objects on the road in real-time. These objects include:

- Road signs

- Pedestrians

- Traffic lights

- Traffic lanes

- Other vehicles

Voice control services are also a result of clever deep-learning algorithms.

To make a deep learning algorithm work, you have to feed it a lot of labeled training data. The algorithm uses the data to train itself to recognize patterns.

For instance, with enough training data, you can teach a deep learning algorithm to recognize objects from images.

12. Ethical AI

Ethical AI refers to AI that adheres to ethical guidelines related to fundamental values, like:

- Individual rights

- Privacy

- Non-discrimination

- Non-manipulation

The idea of ethical AI is to place importance on ethical aspects in specifying what is legitimate/illegitimate use of AI. The organizations that use ethical AI have stated policies and review processes to make sure the AI follows the ethical guidelines.

Ethical AI does not only consider things permissible by law but also thinks one step further. Another way to put it is if something is legal, it doesn’t mean it’s ethical AI.

For instance, an AI algorithm that manipulates people into self-destructive behavior doesn’t represent ethical AI, even if the behavior is legal.

13. Hybrid AI

Hybrid AI is a combination of human insight and AI, such as machine learning and deep learning.

This type of AI is still in its early development phase and has a bunch of challenges. But experts still believe in it.

Another description of Hybrid AI is that it’s a combination of symbolic AI and non-symbolic AI.

A web search is a perfect example of Hybrid AI. Let’s say a user types “1 EUR to USD” into a search engine:

- The search engine identifies a currency-conversion problem in the search phrase. This is the symbolic AI part of the search engine.

- The search engine then runs machine learning algorithms to rank and present the search results. This is the non-symbolic part of the search engine.
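The two parts of that search example can be sketched in a few lines. The symbolic part is a hand-written rule that recognizes a currency conversion. The non-symbolic part is represented here by a simple word-overlap ranking, which is only a stand-in for a trained ranking model. The exchange rate and the page titles are made up.

```python
import re

# Hypothetical exchange rate, hard-coded for illustration only.
RATES = {("EUR", "USD"): 1.10}

# Made-up page titles to rank.
PAGES = [
    "EUR to USD exchange rate today",
    "History of the euro",
    "USD to JPY converter",
]


def convert(query):
    """Symbolic part: a hand-written rule that spots currency conversions."""
    pattern = r"\s*(\d+(?:\.\d+)?)\s+([a-z]{3})\s+to\s+([a-z]{3})\s*"
    match = re.fullmatch(pattern, query, flags=re.IGNORECASE)
    if match is None:
        return None
    amount = float(match[1])
    source, target = match[2].upper(), match[3].upper()
    rate = RATES.get((source, target))
    if rate is None:
        return None
    return f"{amount * rate:.2f} {target}"


def rank(query, pages):
    """Stand-in for the learned part: order pages by shared words."""
    query_words = set(query.lower().split())

    def overlap(page):
        return len(query_words & set(page.lower().split()))

    return sorted(pages, key=overlap, reverse=True)


def search(query):
    """Combine the rule-based answer with the ranked results."""
    return {"answer": convert(query), "results": rank(query, PAGES)}


print(search("1 EUR to USD"))
```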

14. Image Recognition

Image Recognition is a subfield of Computer Vision and AI.

Image Recognition represents methods used to detect and analyze images to automate tasks.

The state-of-the-art image recognition algorithms are capable of identifying people, places, objects, and other similar types of elements within an image or drawing. Besides, the algorithms are able to draw actionable conclusions from the detected objects.

15. Linear Algebra

Linear algebra is the key branch of mathematics when it comes to artificial intelligence and machine learning algorithms.

Linear algebra deals with linear equations, vector spaces, and matrices. Another way to put it is that linear algebra studies linear functions and vectors.

Although you traditionally use linear algebra to model natural phenomena, it’s the key component of making machine learning algorithms work.
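As a small illustration, here is how a simple model’s predictions boil down to a matrix-vector product. The house data and the weights are made up. In practice, the weights would be learned from data.

```python
def dot(u, v):
    """Dot product of two vectors of the same length."""
    return sum(a * b for a, b in zip(u, v))


def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector."""
    return [dot(row, vector) for row in matrix]


# Each row is one house: [size in square meters, number of rooms]. Made-up numbers.
features = [[50, 2], [80, 3], [120, 5]]

# Hypothetical weights a model might have learned.
weights = [2, 10]

# One multiplication produces a prediction for every house at once.
predictions = matvec(features, weights)
print(predictions)  # [120, 190, 290]
```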

16. Machine Learning

Machine Learning is one of the most well-known subfields of AI.

Machine learning is a field of study that focuses on using data and algorithms to simulate human learning. Machine learning programs rely on big data to learn the patterns and relationships in it.

Behind the scenes, machine learning relies heavily on basic linear algebra.

The simplest form of machine learning algorithm takes data and fits a curve to it to predict future values.

The process of the machine learning algorithm can be broken into three key parts:

- Decision process. The algorithm makes a prediction or classification. Using input data the algorithm produces an estimate of patterns in the data.

- Error function. The error function considers the “goodness” of the prediction made by the model. If you have known examples, the error function can compare the prediction to these examples to assess the accuracy.

- Model optimization process. Depending on the outcome of the prediction made by the algorithm, you might need to adjust it. This is to reduce the error produced by the algorithm when comparing a prediction and a real example.
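The three parts above can be sketched with the simplest case: fitting a straight line to data. In this sketch, `predict` plays the role of the decision process, `mean_squared_error` is the error function, and `train` is the optimization process (here a basic method called gradient descent). The data points, step count, and learning rate are made up for illustration.

```python
# Made-up data that follows y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]


def predict(w, b, x):
    """Decision process: the model's guess for input x."""
    return w * x + b


def mean_squared_error(w, b):
    """Error function: average squared gap between guesses and known answers."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(steps=1000, learning_rate=0.05):
    """Optimization: nudge w and b in the direction that reduces the error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train()
print(round(w, 3), round(b, 3))
```

After training, `w` and `b` end up very close to 2 and 1, which is the line the data was generated from.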

17. MidJourney

MidJourney is a new AI art generator that turns text into an image. And not just any image, but a realistic, creative, or abstract masterpiece unlike anything we’ve seen before!

MidJourney has gone viral in the recent past thanks to its amazing AI art creations.

To use MidJourney, give it a text input from your deepest imaginations and it will turn it into an image.

Notice that at the time of writing the tool is still in beta mode and accessible through invitation.

MidJourney represents the new wave of AI text-to-image software. MidJourney has already won a real art contest!

But MidJourney is definitely not the only powerful AI art tool. Other similar solutions, such as DALL-E and Stable Diffusion, are also making their way to the top of the game.

The best part about these AI art tools is that they are evolving so rapidly. You can expect them to only get better over time.

Make sure to check the best AI art generators—you’ll be impressed!

18. Model

Model is a commonly used term in machine learning—the key subfield of AI.

A model or machine learning model is a file that has been trained to recognize specific types of patterns in data. To create a machine learning model, you write a training algorithm and run it on lots of data.

The machine learning model learns from data and you can use the model to predict outcomes of future values.

For instance, you could make a machine learning model that predicts the highest temperature of the day given the temperature in the morning. You could train a linear regression model with a bunch of weather data points from the past.
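Here is a minimal sketch of that temperature example. It fits a straight line through past observations using the standard least-squares formulas and then uses the line to predict. The five weather observations are made up.

```python
# Made-up past observations: (morning temperature, daily high) in degrees Celsius.
history = [(10.0, 16.0), (12.0, 19.0), (15.0, 22.0), (18.0, 26.0), (20.0, 27.0)]


def fit_line(points):
    """Least-squares fit of y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    # Sums of products of deviations from the means.
    sum_xy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sum_xx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sum_xy / sum_xx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# "Training" the model means finding these two numbers.
slope, intercept = fit_line(history)


def predict_high(morning_temp):
    """Use the trained model to predict the daily high."""
    return slope * morning_temp + intercept


print(round(predict_high(14.0), 1))
```

The trained model is nothing more than the two numbers `slope` and `intercept`. Saving those two numbers to a file would give you the model file described above.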

19. Natural Language Processing

Natural Language Processing (NLP) is one of the key subfields of AI. With NLP, a computer program can process text and spoken language in a similar fashion to us humans.

NLP makes it possible for humans to interact with computers using natural language.

Natural Language Processing combines:

- Computational linguistics

- Statistical modeling

- Machine learning models

- Deep learning models

When used in a clever way, a combination of these studies gives rise to computer programs that are capable of understanding the full meaning of the text or spoken language. This means the programs can sense the intention and sentiment too!

NLP is useful in translations, responding to voice commands, text summarization, and more.

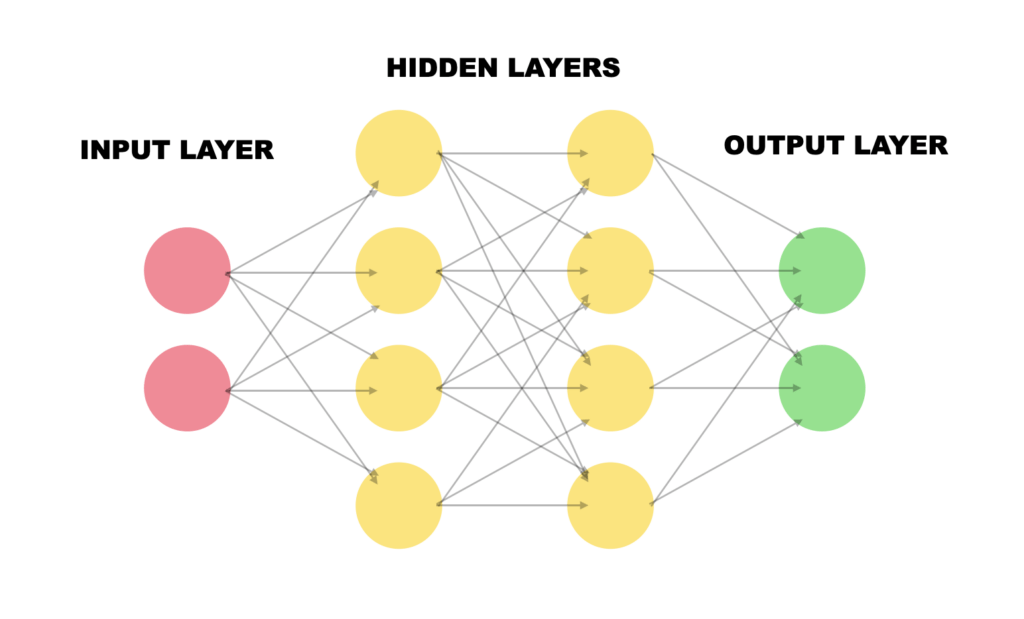

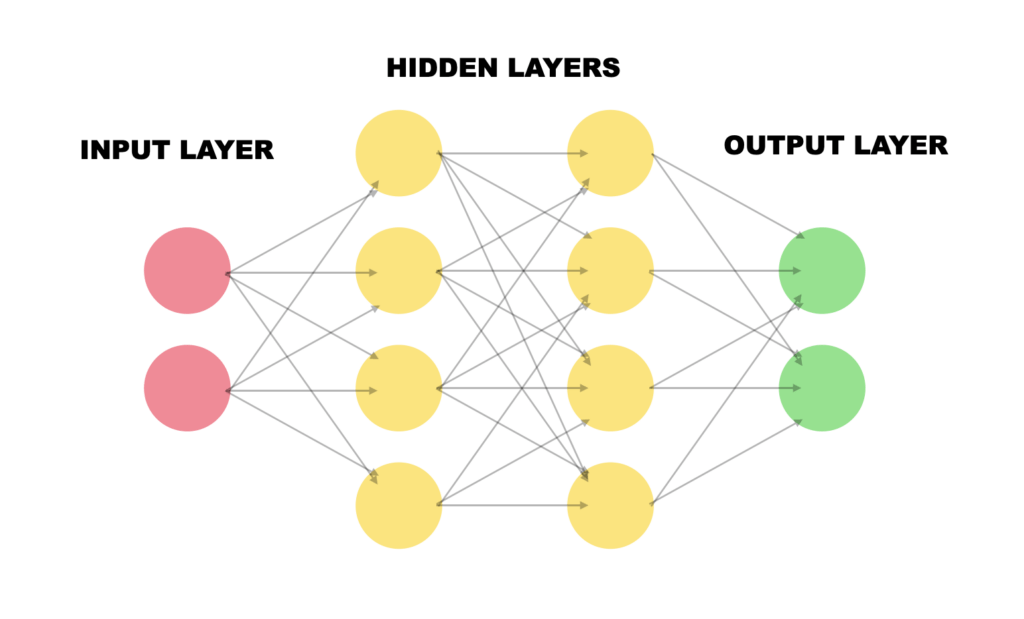

20. Neural Network

A neural network is the core building block of deep learning, which is one of the key subfields of AI.

A neural network mimics the brain’s pattern recognition process. It’s a simulation of how biological neurons communicate in the human brain.

A neural network is formed by:

- An input layer

- One or more hidden layers

- An output layer

The layers consist of nodes (artificial neurons). The previous layer’s nodes are connected to the nodes in the next layer. Besides, each node carries a weight and a threshold.

If the output of a node exceeds the threshold, the node is activated and it sends data to the next layer.

This is similar to how neural activity works in our brain.
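To see the layers and thresholds in action, here is a tiny network with two inputs, one hidden layer of two nodes, and one output node. In this sketch the weights sit on the connections between nodes, and they are picked by hand so that the network computes "one input or the other, but not both". A real network would learn its weights from training data.

```python
def layer(inputs, weights, thresholds):
    """Each node sums its weighted inputs and fires (1) if the sum exceeds its threshold."""
    outputs = []
    for node_weights, threshold in zip(weights, thresholds):
        total = sum(w * x for w, x in zip(node_weights, inputs))
        outputs.append(1 if total > threshold else 0)
    return outputs


# Hand-picked values for illustration, not learned from data.
HIDDEN_WEIGHTS = [[1, 1], [1, 1]]   # one row of connection weights per hidden node
HIDDEN_THRESHOLDS = [0.5, 1.5]      # first node fires on "a or b", second on "a and b"
OUTPUT_WEIGHTS = [[1, -1]]          # the second hidden node cancels the first
OUTPUT_THRESHOLDS = [0.5]


def network(a, b):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = layer([a, b], HIDDEN_WEIGHTS, HIDDEN_THRESHOLDS)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_THRESHOLDS)[0]


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", network(a, b))
```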

When building a deep learning algorithm, a developer runs the training data through a neural network. The algorithm then learns to classify the training data based on neural network activations.

21. OpenAI

OpenAI is an AI research lab and company.

OpenAI is one of the key players in the field. The company has developed a bunch of AI-powered programs and algorithms unlike anything seen before.

The two best-known examples of OpenAI’s AI algorithms are:

- DALL-E. This AI algorithm allows for generating outstanding images based on text input.

- GPT-3. This cutting-edge natural language processing model allows for creating human-like text based on a short input. It can write news, poems, short stories, or even books.

The best part is that many of these AI models are available to the general public.

Anyone can access GPT-3 and develop a super powerful text-generation app with a few lines of code. This happens through the GPT-3 API that OpenAI provides.

At the time of writing, DALL-E is not yet in general availability, but it is accessible via a waiting list and an invitation.

22. Optical Character Recognition

Optical character recognition or OCR (text recognition) extracts textual data from images, scanned documents, and PDFs.

The OCR algorithms work by:

- Identifying all the letters in an image.

- Putting the letters into words.

- Putting the words into sentences.

OCR can use AI to create even more impressive methods for extracting text from images. Sometimes the AI-powered OCR is called ICR for Intelligent Character Recognition.

ICR can identify languages from writing or extract information in different styles of handwriting.

A typical OCR software helps save time and cut costs when it comes to turning physical documents into digital format. Instead of doing the work manually, you can take a picture and use OCR to scan its contents.

Make sure to read my article about the best OCR software.

23. Prompt Engineering

Prompt engineering is a relatively new term in the field of AI.

Prompt engineering refers to writing carefully thought-out text inputs for AI algorithms to generate the desired outcome.

The term prompt engineering is relevant when using:

- AI text-to-image generators

- AI text generators

For example, an AI text-to-image generator might produce rather unimpressive results if the text input is not descriptive and specific enough.

To use AI to generate impressive images, videos, or text, you need to be careful when giving input.

For instance, you might need to drop the name of an artist, an era, a painting style, and such. You also might need to insert some technical terminology in the prompt to give the imagery a specific type of look.
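One way to stay systematic about this is to assemble prompts from named parts. The helper below is a hypothetical sketch, not part of any image generator. The comma-separated format is an assumption, and each tool has its own preferences.

```python
def build_prompt(subject, style=None, artist=None, era=None, technical=()):
    """Assemble a descriptive text-to-image prompt from optional parts."""
    parts = [subject]
    if style:
        parts.append(f"{style} style")
    if artist:
        parts.append(f"in the manner of {artist}")
    if era:
        parts.append(f"{era} era")
    # Technical terms (lens, lighting, and so on) go last.
    parts.extend(technical)
    return ", ".join(parts)


vague = build_prompt("a lighthouse at dusk")
detailed = build_prompt(
    "a lighthouse at dusk",
    style="oil painting",
    artist="Vincent van Gogh",
    era="1880s",
    technical=("wide-angle", "dramatic lighting"),
)
print(vague)
print(detailed)
```

The first prompt leaves almost everything up to the generator. The second one pins down the look you are after.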

Prompt engineering might be the career of the future.

24. Singularity

In the field of AI, singularity refers to the event where the AI becomes self-aware and starts to evolve on its own out of control.

But don’t worry just yet. The modern-day AI isn’t very smart compared to the human brain.

Even though singularity is not a reasonable present concern, it’s definitely something we have to be careful about in the future. The rapid developments in computing and technology might make AI dangerous or destructive.

The developments of AI must focus on making AI our friend, not our enemy.

25. Speech Recognition

Speech recognition is a popular application of artificial intelligence.

The idea of speech recognition or automatic speech recognition (ASR) is for a computer program to pick up on the spoken words and turn them into text.

Many speech recognition services use AI algorithms to process spoken language. They utilize the composition of audio and voice signals to process speech.

There are many speech recognition algorithms, including natural language processing techniques and neural networks.

The ideal AI-based speech recognition system learns as it goes. This makes the tools more accurate over time.

26. Strong AI

Strong AI is a theoretical level of artificial intelligence where the AI is as intelligent as humans and is self-aware. Moreover, a strong AI system would be able to solve problems, learn new skills, and plan the future—like we humans do.

Strong AI is commonly known as artificial general intelligence or AGI for short.

It’s up for debate if we’ll ever reach this level of AI. Some optimistic researchers claim this type of AI is not further than a couple of decades away from us. Others say it will never be achieved. Only time will tell.

27. Turing Test

The Turing test is a test that determines whether a machine has human-like intelligence.

If a machine can have a conversation with a human without being detected as a machine, the machine has passed the Turing test—or shown human intelligence.

The Turing test was proposed by Alan Turing back in 1950.

Even with the impressive and rapid developments in the field of AI, no machine has ever passed a Turing test. But it’s getting closer.

The main motivation and theories of AI all revolve around the concept of passing a Turing test. This is why you commonly hear people talking about the Turing test or passing it.

28. Weak AI

Weak AI is an approach to AI research and development in which AI is considered to only be capable of simulating the human intelligence process.

Weak AI systems aren’t actually conscious.

Weak AI is bound by the rules developed for it and cannot autonomously go beyond those rules.

A great example of weak AI is a chatbot. It appears to be conscious and answers intelligently. But it cannot go beyond that.

As a matter of fact, all modern-day AI solutions are examples of weak AI.

Weak AI is also commonly called narrow artificial intelligence.

Conclusion

So there you have it—a whole bunch of AI jargon you might hear every day!

In short, AI or artificial intelligence is a rapidly developing subfield of computer science. AI is already used in impressive applications and it can carry out tasks that were not possible before. Only time will tell what the future holds for AI and us living with it.

Thanks for reading!