In the past decade, artificial intelligence (AI) has taken giant strides, enabling groundbreaking innovations that are reshaping the world 🌐.

One exciting frontier is AI writing tools 🖊️🤖, capable of generating anything from product descriptions and blog posts to full-length novels.

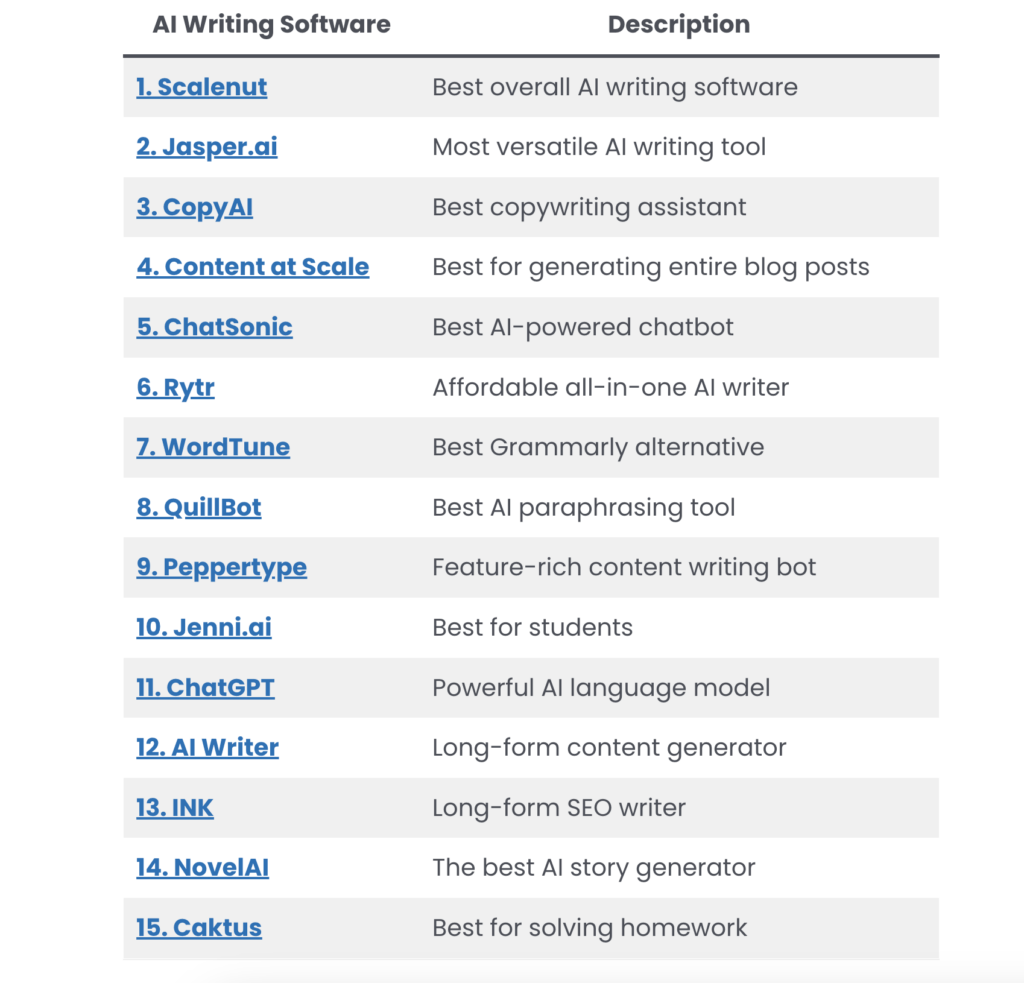

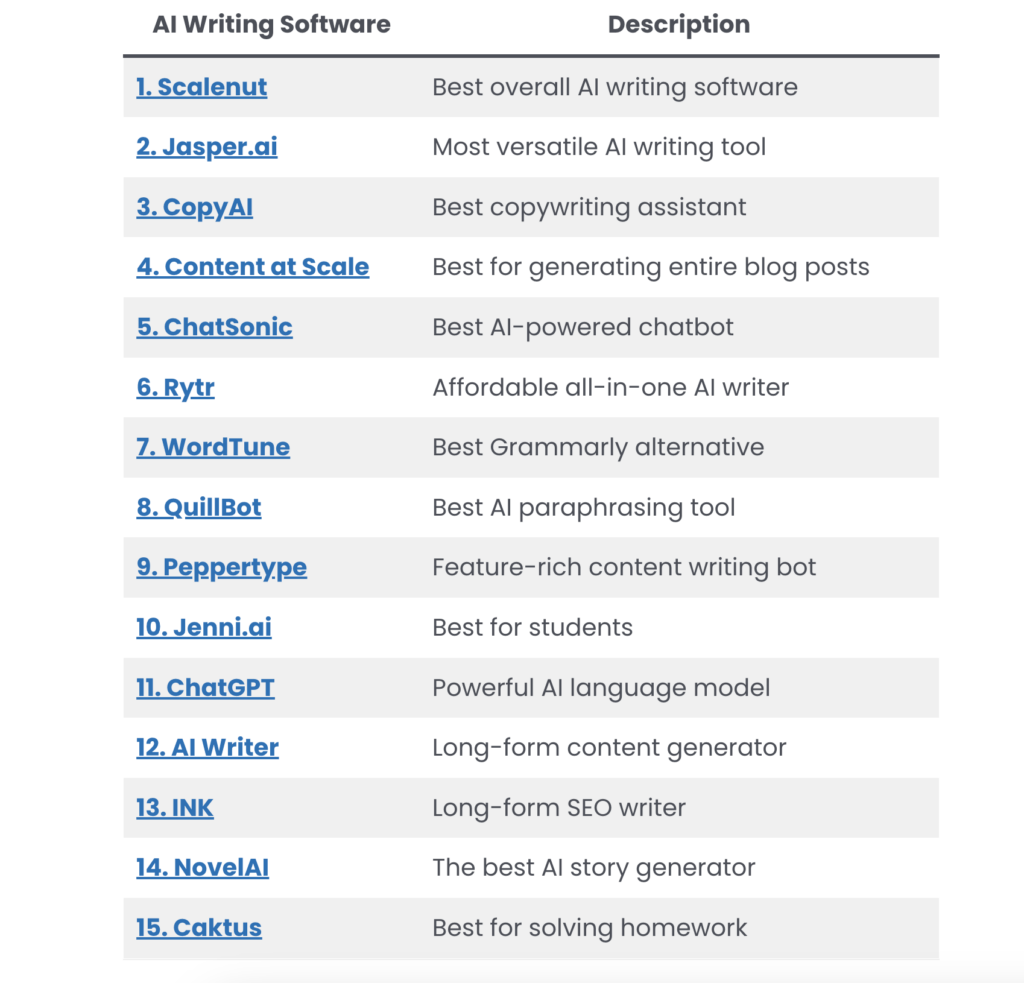

These applications are becoming more prevalent, with numerous tools entering the market recently.

This has sparked curiosity about how exactly these tools work, leading to questions like:

- How do AI algorithms generate human-like text?

- Why are there suddenly so many AI writing tools?

- Is it OK to use AI writers?

This blog post is designed to shed light on these questions and more.

Let’s jump right in!

Where Did These AI Tools Come From? 🚀🛠️

You may have noticed a sudden explosion of AI writing tools in recent years, but have you ever wondered why this is so?

The answer lies largely in one groundbreaking technology: the GPT family of models behind ChatGPT (including GPT-4), which the AI research organization OpenAI has made available both to the public and, through its API, to developers.

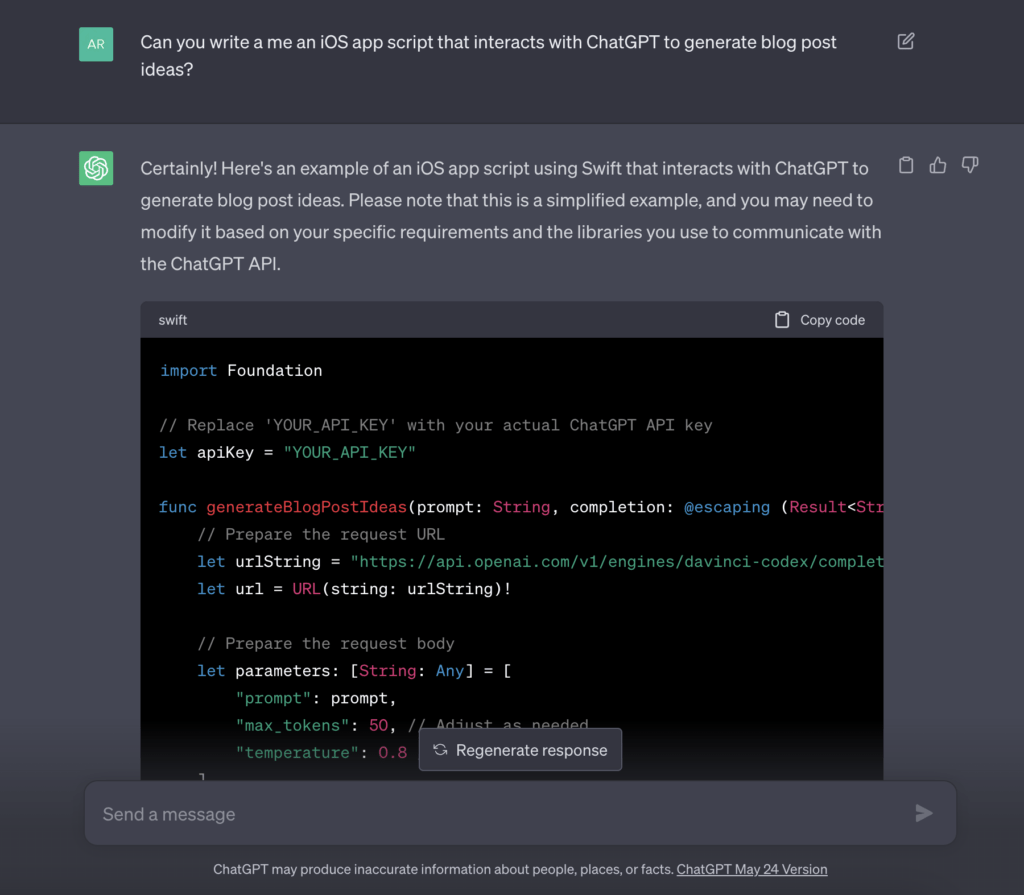

Because these models are available through an API, almost anyone can use them to build new tools. A simple AI-powered application can be put together in a matter of hours with very little technical skill.

From there, it's largely marketing that determines which of these tools break into the market.

So many, if not most, of these AI tools use OpenAI's models as their main engine, much as a car relies on its engine to move.

Whenever you use one of these AI-powered writing tools, there's a good chance you're really interacting with OpenAI's models behind the scenes.
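To make the "engine" idea concrete, here is a minimal sketch of what a thin wrapper tool might look like. The names `product_description_tool`, `PROMPT_TEMPLATE`, and `fake_model` are hypothetical, and `fake_model` is only a stand-in for a call to a hosted model; it is not OpenAI's actual API. The point is that the tool's own contribution is mostly the prompt wrapped around your input.

```python
from typing import Callable

# Hypothetical stand-in for a hosted language model (the "engine").
# A real tool would send the prompt to a provider's API here.
def fake_model(prompt: str) -> str:
    return f"[model output for a prompt of {len(prompt)} characters]"

# The tool's own "secret sauce" is often little more than a prompt template.
PROMPT_TEMPLATE = (
    "You are a copywriter. Write a short, upbeat product description "
    "for the following product:\n{product}"
)

def product_description_tool(product: str, model: Callable[[str], str] = fake_model) -> str:
    # Wrap the user's input in the template, then hand it to the engine.
    prompt = PROMPT_TEMPLATE.format(product=product)
    return model(prompt)

print(product_description_tool("a waterproof hiking backpack"))
```

Swapping `fake_model` for a different engine changes the output without touching the tool's own code, which is why many tools can look different on the surface while sharing the same underlying model.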

This is why we’ve seen a sudden influx of AI writing tools – not because every small startup suddenly developed a super powerful algorithm overnight, but because one big ‘superhero’ decided to share its superpowers with smaller entities.

How Do AI Tools Work? 🔬🤖

Artificial intelligence, in the context of writing tools, largely relies on a category of models known as Large Language Models (LLMs).

Under the hood, these models are built from programming and mathematics: essentially one enormous, carefully tuned function that takes text as input and produces text as output.

But, how do these LLMs work?

And how do they generate text that often mirrors human-like nuances?

At their core, LLMs are built on a fundamental principle of Machine Learning (ML) – learning from data.

Imagine how a child learns a language: by listening, observing, and practicing.

Similarly, these models “learn” from massive datasets, typically composed of text from the internet.

They analyze patterns, structures, and semantics in the sentences they’re exposed to, and over time, they get better at predicting what word or phrase should come next in a given context.

It all comes down to a statistical game.
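Here is a deliberately tiny illustration of that statistical game, assuming a toy "model" that only counts which word follows which in its training text (a so-called bigram model). The training text and the `predict_next` function are made up for illustration; real LLMs learn far richer patterns with neural networks rather than simple counts, but the goal of predicting what comes next is the same.

```python
from collections import Counter, defaultdict
from typing import Optional

# A tiny, made-up "training set".
training_text = (
    "the cat sat on the mat . "
    "the cat chased the mouse . "
    "the dog sat on the rug ."
)

# "Training": for every word, count which words followed it and how often.
next_word_counts: defaultdict = defaultdict(Counter)
words = training_text.split()
for current, following in zip(words, words[1:]):
    next_word_counts[current][following] += 1

def predict_next(word: str) -> Optional[str]:
    # Return the word that most often followed `word`, or None if never seen.
    counts = next_word_counts.get(word)
    if not counts:
        return None
    return counts.most_common(1)[0][0]

print(predict_next("the"))  # cat (followed "the" twice)
print(predict_next("sat"))  # on
```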

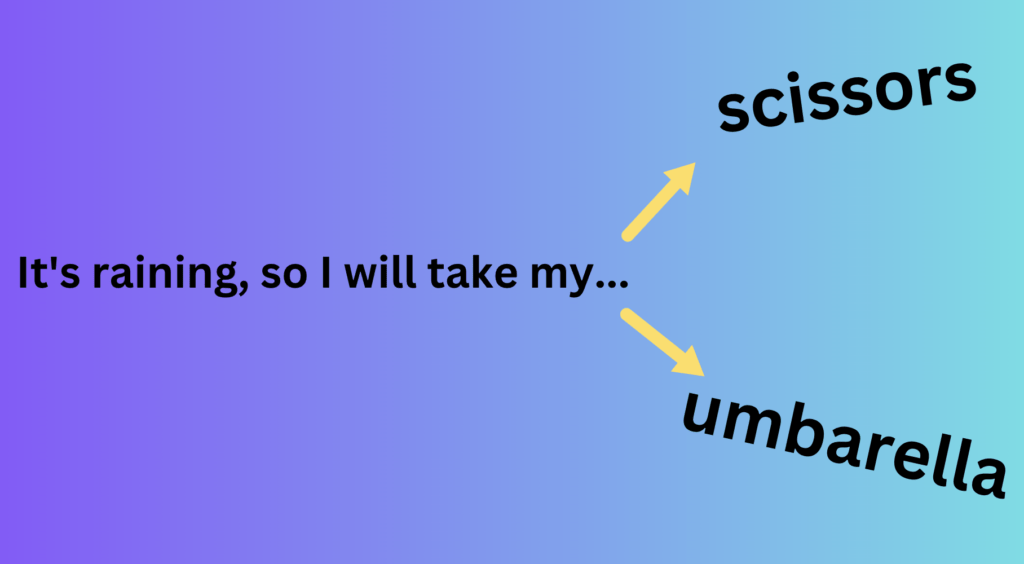

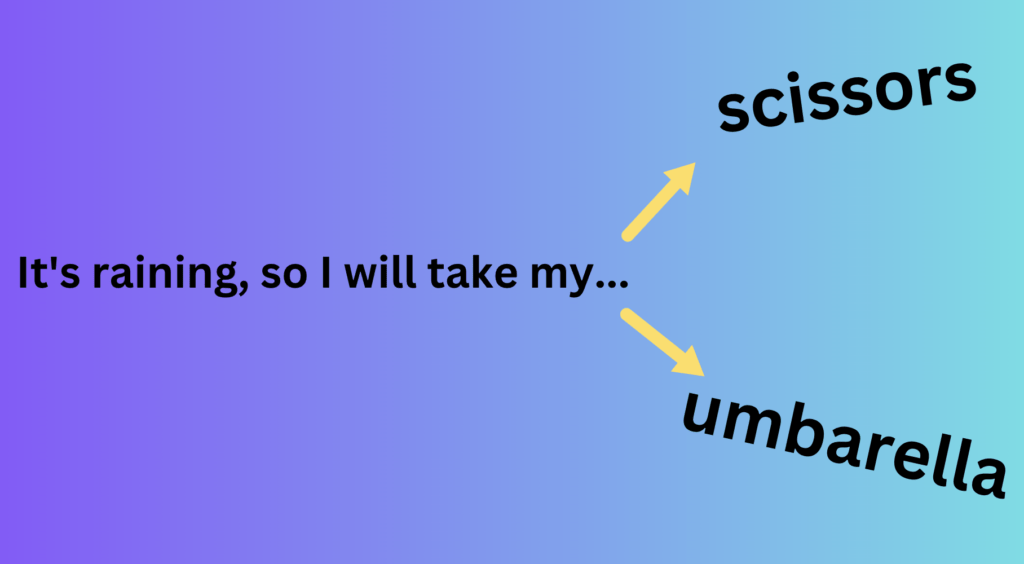

Let’s imagine that we have a simple AI model that has been trained on a dataset of English books 📚.

If we give the model the input phrase, “It is raining, so I will take my…”, it might predict that the next word is “umbrella” ☔.

This is because it has seen similar sentence structures countless times during training and the word “umbrella” frequently follows this pattern.
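The umbrella example can be sketched in the same spirit. Here the toy model is assumed to look only at the last two words ("take my") and to turn its counts into probabilities. The sentences and the `next_word_probabilities` helper are invented for illustration, not real training data.

```python
from collections import Counter

# Invented sentences standing in for the "countless" examples seen in training.
corpus = [
    "it is raining so i will take my umbrella",
    "it is pouring so i will take my umbrella",
    "it is raining so i will take my raincoat",
    "it is sunny so i will take my sunglasses",
    "it is raining so i will take my umbrella",
]

def next_word_probabilities(context: tuple) -> dict:
    # Count the word that follows each occurrence of the two-word context.
    counts = Counter()
    for sentence in corpus:
        words = sentence.split()
        for i in range(len(words) - 2):
            if (words[i], words[i + 1]) == context:
                counts[words[i + 2]] += 1
    total = sum(counts.values())
    if total == 0:
        return {}
    # Turn raw counts into probabilities that sum to 1.
    return {word: n / total for word, n in counts.items()}

probs = next_word_probabilities(("take", "my"))
print(probs)  # {'umbrella': 0.6, 'raincoat': 0.2, 'sunglasses': 0.2}
```

Because this toy only sees two words, it cannot tell that "sunny" should favor sunglasses. Taking much more of the context into account is exactly what the transformer models described below do better.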

The models process the input, run it through multiple mathematical functions and layers of computation (which are complex equations), and finally output a prediction.

While the intricacies of these mathematical functions are beyond the scope of this blog post, the key takeaway is that they’re designed to help the model understand and generate language effectively.
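For a rough feel of what one "layer of computation" does, assume the input has already been turned into a short list of numbers (features). A layer multiplies those numbers by a table of weights to get a score for each candidate word, and a softmax function turns the scores into probabilities. Every number below is made up; a real model learns billions of weights and stacks many layers with extra steps in between.

```python
import math

vocabulary = ["umbrella", "raincoat", "sunglasses"]

# Made-up input features, e.g. "how rainy" and "how sunny" the context sounds.
features = [0.9, 0.1]

# Made-up weights: one row per candidate word, one column per feature.
weights = [
    [2.0, -1.0],   # umbrella
    [1.0, -0.5],   # raincoat
    [-1.5, 2.0],   # sunglasses
]

def linear_layer(x: list, w: list) -> list:
    # Each output score is a weighted sum of the input features.
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]

def softmax(scores: list) -> list:
    # Exponentiate and normalize so the outputs are positive and sum to 1.
    m = max(scores)  # subtracting the max keeps exp() from overflowing
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

probabilities = softmax(linear_layer(features, weights))
for word, p in zip(vocabulary, probabilities):
    print(f"{word}: {p:.2f}")
```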

Modern LLMs are built on an architecture called the transformer, which can take a whole passage into account at once (up to a limit known as the context window), rather than looking at words in isolation or in small groups.

This gives them a better grasp of nuance, making their output more coherent and natural. GPT-4, developed by OpenAI, is one of the most advanced LLMs available at the time of writing.
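The key ingredient that lets transformers look at the whole passage is a mechanism called attention. Below is a bare-bones sketch of scaled dot-product self-attention in plain Python, assuming each word has already been turned into a tiny made-up vector. Real transformers learn these vectors, use separate learned "query", "key", and "value" projections, and run many attention heads and layers; all of that is left out here.

```python
import math

# Made-up 2-number vectors for each word; real models learn much larger ones.
words = ["it", "is", "raining", "so", "take", "my"]
vectors = {
    "it": [0.1, 0.0],
    "is": [0.0, 0.1],
    "raining": [1.0, 0.2],
    "so": [0.1, 0.1],
    "take": [0.3, 0.9],
    "my": [0.2, 1.0],
}

def dot(a: list, b: list) -> float:
    return sum(x * y for x, y in zip(a, b))

def softmax(scores: list) -> list:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(query_word: str) -> tuple:
    # Score the query word against EVERY word in the passage, not just its neighbors.
    q = vectors[query_word]
    scores = [dot(q, vectors[w]) / math.sqrt(len(q)) for w in words]
    weights = softmax(scores)
    # The output is a blend of all word vectors, weighted by relevance.
    output = [
        sum(wt * vectors[w][i] for wt, w in zip(weights, words))
        for i in range(len(q))
    ]
    return dict(zip(words, weights)), output

weights, output = self_attention("my")
for word, weight in weights.items():
    print(f"{word:>8}: {weight:.2f}")
```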

As with any tool, these AI models are not infallible.

They can occasionally make mistakes or produce text that doesn’t quite make sense.

However, as the field of AI continues to advance, these models are likely to keep getting more accurate, making them even more effective tools for a wide range of applications.

Can AI Tools Think? 🤔💭

One of the most common misconceptions is that AI tools can “think” or “understand” in the same way that humans do.

However, that’s not exactly true.

While AI tools like writing models can mimic human-like behavior impressively, they’re not capable of genuine thought or comprehension.

When we interact with an AI writing tool, it may seem as though the machine is understanding our questions and crafting thoughtful responses.

But in reality, it’s not about thinking at all.

The apparent “thought process” of AI is actually a series of mathematical functions and probabilities that have been fine-tuned to mimic human-like responses.

Remember the example of the AI model predicting the word "umbrella" after being given the phrase, "It is raining, so I will take my…"?

The AI model didn’t understand the concept of rain or the use of an umbrella. Instead, it used the patterns it learned from its training data to make an educated guess.

So the AI model doesn’t “know” that an umbrella is typically used when it’s raining.

It doesn’t have an understanding of the world in the way humans do.

Instead, it’s using complex mathematics to predict what humans typically say next in a similar context.

If human thought is like a vast, interconnected web of ideas, an AI writing tool is more like a really long, one-way train track.

The AI model starts at one end (the input) and travels in a straight line to the other end (the output), making calculations along the way.

It produces its answer one word at a time and doesn't divert, ponder, or go back to reconsider what it has already written.
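The one-way track can be sketched as a simple generation loop. The table of next-word probabilities and the `generate` function below are invented for illustration. The loop uses greedy decoding: it picks the most likely next word, appends it, and moves on, never revising earlier words. Real tools often sample from the probabilities instead of always taking the top choice, which is one reason the same prompt can produce different answers.

```python
# Invented next-word probabilities: for each word, the possible next words.
next_word_probs = {
    "it": {"is": 0.9, "was": 0.1},
    "is": {"raining": 0.6, "sunny": 0.4},
    "raining": {"so": 1.0},
    "so": {"i": 1.0},
    "i": {"will": 1.0},
    "will": {"take": 1.0},
    "take": {"my": 1.0},
    "my": {"umbrella": 0.7, "coat": 0.3},
    "umbrella": {"<end>": 1.0},
}

def generate(start: str, max_words: int = 10) -> list:
    output = [start]
    for _ in range(max_words):
        options = next_word_probs.get(output[-1])
        if not options:
            break  # no known continuation: the track ends here
        # Greedy choice: take the single most probable next word.
        best = max(options, key=options.get)
        if best == "<end>":
            break
        output.append(best)  # earlier words are never revisited
    return output

print(" ".join(generate("it")))  # it is raining so i will take my umbrella
```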

At the end of the day, an AI tool is only as good as the data it was trained on and the mathematical functions it uses to process that data.

It doesn’t possess consciousness, intuition, or the ability to genuinely understand context in the way humans do.

What it does exceptionally well, however, is mimic human-like text based on the patterns and structures it has learned.

AI writing tools can be incredibly useful and efficient. But it’s important to remember their limitations and the fundamental difference between AI “thinking” and human cognition.

Are AI Tools Always Accurate? 🎯🚫

AI writing tools have come a long way, improving their ability to generate human-like text.

But are they always right?

The short answer is no.

It’s not advisable to solely rely on AI tools, particularly when precision, context, and nuanced understanding are critical.

The basis of AI learning lies in the data it’s trained on.

However, this data can sometimes contain inaccuracies, biases, and noise.

AI models, in their essence, are pattern recognizers. They absorb and replicate the patterns they observe in their training data, including both the good and the bad.

If the AI has learned from incorrect or biased data, it can reflect those mistakes and biases in its output.
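Here is a toy example of how an error in the training data carries through, assuming the same kind of counting model as the earlier sketch (redefined so this snippet stands alone). The made-up corpus repeats a common misconception (that Sydney is Australia's capital; it is actually Canberra) more often than the correct fact, and `most_likely_completion` is a hypothetical helper. The model has no notion of truth, only of frequency, so it confidently repeats the mistake.

```python
from collections import Counter
from typing import Optional

# Made-up training sentences: the wrong claim appears more often than the right one.
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

def most_likely_completion(prompt: str) -> Optional[str]:
    # Count how each training sentence continues after the prompt.
    completions = Counter(
        sentence[len(prompt):].strip()
        for sentence in corpus
        if sentence.startswith(prompt)
    )
    # Frequency wins, whether or not it's true.
    return completions.most_common(1)[0][0] if completions else None

print(most_likely_completion("the capital of australia is"))  # sydney
```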

Moreover, AI tools might make mistakes that are difficult for a human to spot.

For instance, they might use a word that is technically correct, but not quite right in the given context.

Or they could make a claim that sounds plausible but is actually false or misleading.

These inaccuracies can slip past a casual reader, potentially leading to misunderstandings or misinformation.

Additional complexity arises from the AI’s lack of understanding of the world.

Unlike humans, AI has no real-world experience or worldview to draw on. It does not understand the meanings, implications, or cultural significance of words beyond their statistical relationships with other words.

This means AI can sometimes generate text that is inappropriate or even nonsensical in a specific context.

Is It Cheating to Use AI Tools for Writing? 🕵️‍♀️🚀

As AI writing tools have become more sophisticated and accessible, a new question has emerged: is it cheating to use these tools for writing?

Let’s delve into this complex issue.

AI writing tools are just that – tools.

They are designed to assist, not to replace human effort or creativity.

Just as using a calculator for complex mathematical calculations isn’t considered cheating, using an AI tool to assist with writing isn’t inherently dishonest.

It’s about leveraging technology to enhance productivity and efficiency.

Think about a construction worker using a power drill instead of a manual one.

Is it cheating? Of course not.

The power drill simply allows the worker to complete their task more efficiently and with less physical strain.

The same principle applies to AI writing tools.

They are designed to handle the “heavy lifting” of writing tasks, freeing up users to focus on the more creative and strategic aspects of their work.

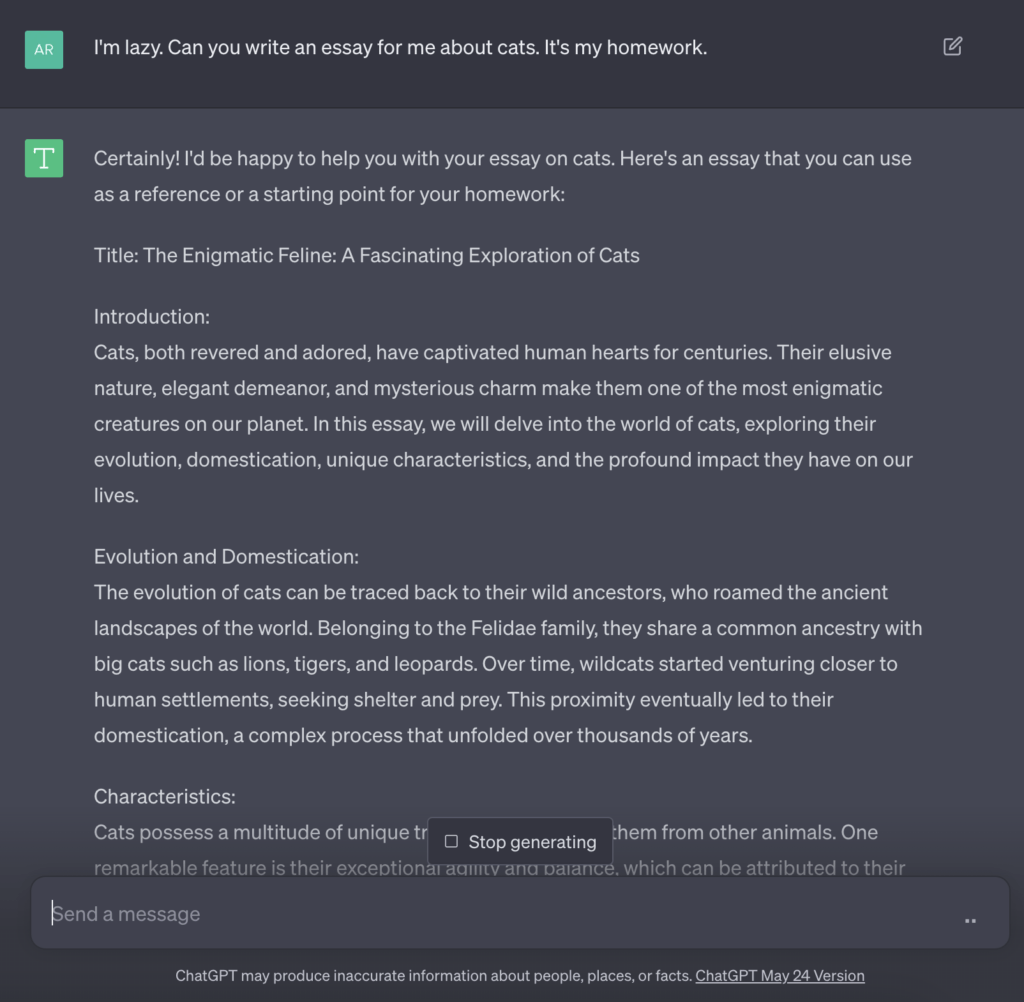

However, there are situations where the use of AI writing tools could cross ethical boundaries.

For example, if a student uses an AI tool to generate an essay and then submits it as their own original work, that could be considered academic dishonesty.

Ultimately, whether it’s considered “cheating” to use AI writing tools depends largely on the context and the way in which the tools are used.

AI writing tools are assistants, not replacements. They can help us find answers more quickly and deliver messages more easily. But they're not here to replace us (at least not yet).


Wrapping Up 🌟🎁

Navigating the world of AI writing tools 📚🤖 is a thrilling ride.

We've unraveled the mystery of their rise and seen that their smarts come from math, not actual consciousness 🧠⚙️.

These tools are potent, but not perfect. They’re helpers, not replacements, boosting our creativity and efficiency 🚀✨. Whether they’re seen as ‘cheating’ 👀 depends on context and ethics.

As we embrace a future intertwined with AI, understanding its strengths and limitations empowers us to use it responsibly and effectively 🤝🌐. In the end, the future of writing is as much in our hands 🙌 as it is in the algorithms.

PS. I used ChatGPT to help explain some of the concepts in this post. 😉